All Software Is Not Born Equal

April 14, 2026

Article by Haresh Vazirani, Managing Director, Patria Private Market Solutions, March 2026

Software ≠ Software: A More Nuanced View of AI Disruption

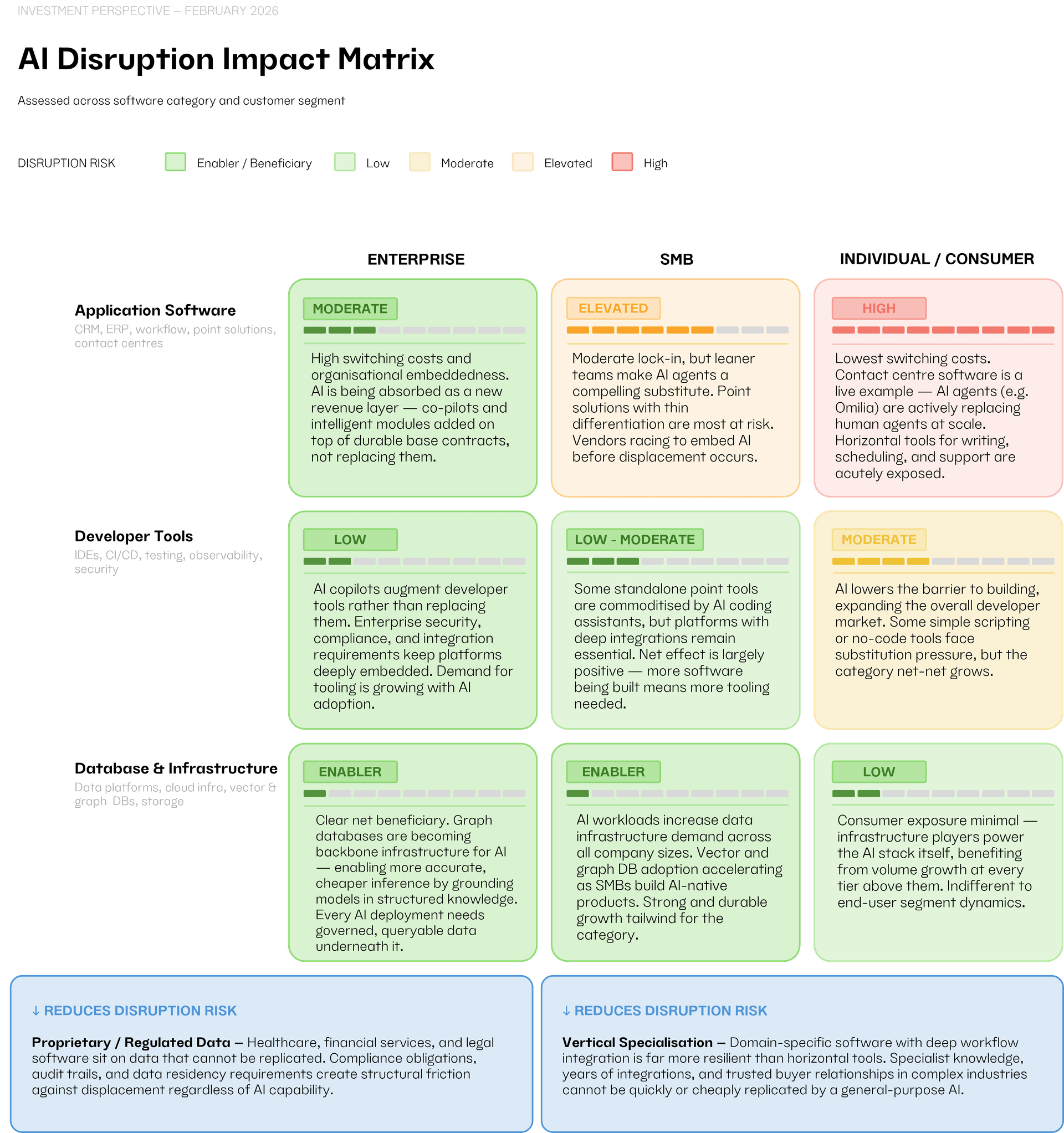

The conversation about AI disrupting software is legitimate, but it is being applied too broadly. Over the past few weeks, the market has treated software as a single category under threat, when the picture is likely to be far more varied. The type of software, the customer it serves, and the data set it uses all determine how exposed any given business is. Getting these distinctions right matters.

Some software businesses may face genuine and near-term disruption risk. Others are likely to be structurally well-protected. And a third group, infrastructure and developer tools, is actively benefiting from the AI wave. The challenge is that these three groups are being discussed as one.

We believe application software, the tools that run day-to-day workflows, serve customers, and manage operations, is the layer most directly in the line of fire. The disruption here is not hypothetical. Contact centres are an immediate example: AI conversation agents are replacing human agents at scale, with economics compelling enough that adoption will only accelerate. Point solutions in document drafting, customer support, and routine data processing face similar pressure. Where AI can replicate the core function of a software tool, and the customer has limited switching costs, the risk is real.

That said, not all application software is equally exposed. The risk is likely highest for horizontal point solutions serving consumers or freelancers. Enterprise application software, deeply embedded, with high switching costs and complex implementation requirements, is in a different position entirely, which we address below.

Developer tools and data infrastructure are not being disrupted by AI; they are being made more essential by it. Every AI-native product requires infrastructure to run on. Every model requires data to be stored, queried, and governed. The growth in AI workloads is a direct tailwind for this part of the tech stack, and demand is accelerating across observability, security, and developer platform categories.

Within infrastructure, graph databases deserve particular attention. Two of the most pressing challenges with AI today are accuracy and cost — models hallucinate, and inference at scale is expensive. Graph databases address both directly. By grounding AI models in structured, interconnected knowledge graphs, organizations could significantly improve the reliability of outputs while reducing the volume of tokens processed, which cuts cost. This is fast becoming a core architectural requirement for serious enterprise AI deployments, not a niche consideration.
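As a rough illustration of the grounding idea, the sketch below uses a toy in-memory knowledge graph (a plain Python dictionary, not a real graph database) and compares a prompt context built only from facts connected to the entity in question against one built from every stored fact. All entity names, relations, and function names are hypothetical, and word count is only a crude stand-in for tokens.

```python
from collections import deque

# Hypothetical toy knowledge graph: each entity maps to (relation, target) edges.
GRAPH = {
    "Acme Corp": [("supplied_by", "Bolt Ltd"), ("headquartered_in", "Lisbon")],
    "Bolt Ltd": [("audited_by", "Finch LLP")],
    "Finch LLP": [],
    "Lisbon": [],
    "Zenith Inc": [("supplied_by", "Orion GmbH"), ("headquartered_in", "Oslo")],
    "Orion GmbH": [("audited_by", "Quill LLP")],
    "Quill LLP": [],
    "Oslo": [],
}


def facts_within(graph, start, max_hops):
    """Collect facts reachable from `start` in at most `max_hops` edges (breadth-first)."""
    facts, seen, queue = [], {start}, deque([(start, 0)])
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for relation, target in graph.get(node, []):
            facts.append(f"{node} {relation} {target}")
            if target not in seen:
                seen.add(target)
                queue.append((target, depth + 1))
    return facts


def all_facts(graph):
    """Every fact in the graph, as would be sent without targeted retrieval."""
    return [f"{s} {r} {t}" for s, edges in graph.items() for r, t in edges]


def rough_size(facts):
    """Word count as a crude proxy for prompt tokens (an assumption, not a tokenizer)."""
    return sum(len(fact.split()) for fact in facts)


grounded = facts_within(GRAPH, "Acme Corp", max_hops=2)
everything = all_facts(GRAPH)
print(len(grounded), "grounded facts vs", len(everything), "total facts")
print(rough_size(grounded), "words vs", rough_size(everything), "words")
```

In this toy setup, a question about one company pulls in only the facts linked to that company, so the context passed to a model is smaller and every statement in it can be traced to an explicit relationship. Real deployments would differ considerably in scale and tooling.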

One factor that is being consistently underweighted in the current debate is the role of proprietary data. Software businesses built on data that cannot be replicated (years of patient outcomes in healthcare, transaction-level financial data, historical legal records) have a form of protection that a general-purpose AI model simply cannot overcome. The data itself is the competitive advantage, and it compounds over time.

Regulation reinforces this further. In financial services, healthcare, and legal, compliance requirements, audit obligations, and data residency rules create substantial friction against switching to new solutions, regardless of their technical capability. Incumbent vendors in these verticals are often more durable than they appear on the surface. The regulatory environment that feels like a burden in normal times becomes a meaningful barrier to disruption when technology shifts.

Replacing enterprise software is fundamentally an organizational challenge, not a technical one. It requires renegotiating contracts, migrating years of data, retraining teams, and absorbing implementation risk that can run into the tens of millions of dollars. Large enterprises do not move quickly, and the cost of a failed transition is high. These switching costs are a structural feature of the enterprise software model, and they remain intact in an AI world.

The stronger enterprise software vendors are also not passive observers. Leading platforms across ERP, CRM, and HCM have been building AI capabilities directly into their products — intelligent modules, workflow co-pilots, and predictive analytics layered on top of existing contracts. A useful way to think about this is the “speedboat within a mothership” dynamic: inside many large enterprise software businesses, an AI-powered division is growing rapidly, operating with the pace and focus of a startup while benefiting from the distribution, customer relationships, and data of the parent. For many of these businesses, AI is becoming a fast-growing new revenue stream rather than an existential threat. Their customers are likely to consume AI through the platforms they already use, not by replacing them. Take the example of Agentforce, Salesforce’s AI product, which has grown to $800m in ARR, having debuted only 18 months ago. Agentforce grew 50% QoQ and signed 29,000 new deals in the last quarter*.

It is worth stepping back and remembering that the software industry has navigated major technology transitions before. The shift from on-premise to cloud and SaaS was, at the time, described in similarly existential terms. Established vendors were going to be wiped out. The economics of the industry were going to collapse. Neither happened.

What happened was more interesting. Disruption did occur. Some businesses did not make it, and some categories were permanently altered. But the vendors who adapted came out structurally stronger. SaaS economics turned out to be better than on-premise: recurring revenue, lower distribution costs, faster product iteration, and significantly higher margins. The transition was painful in parts, but the industry that emerged was healthier than the one that entered it.

There is good reason to believe AI will follow a similar pattern. The software companies that integrate AI thoughtfully, using it to automate lower-value tasks, improve product quality, and reduce the cost of delivery, stand to come out with better unit economics than they have today. AI is not just a threat to the software business model; for those who move well, it is an opportunity to improve it.

Generative AI models are increasingly functioning as a base software layer. They can draft, summarize, classify, reason, and automate at a level that was not possible two years ago. This has led many organizations to ask whether they should build their own AI-powered tools rather than buy from vendors. It is a reasonable question, and many are attempting it.

But building on top of a foundation model is only the beginning. Managing model performance, handling updates and version changes, tailoring outputs to specific workflows, ensuring security and compliance, and integrating with existing systems all require sustained engineering effort and expertise. The base layer is accessible; everything built on top of it is not trivial. Most organisations are not set up to do this well on an ongoing basis.

The history of enterprise technology is instructive here. Organisations have consistently overestimated their ability to build and maintain custom software and underestimated the total cost of doing so. The pattern is familiar: internal teams build something that works initially, the cost and complexity of maintaining it grow, priorities shift, and eventually the organisation buys the solution it should have bought in the first place. AI is unlikely to be different. The buy-versus-build debate will be live for the next few years, but the direction of travel is well-established.

The software most exposed to AI disruption tends to sit at a particular intersection: horizontal in nature, serving consumers or SMBs with low switching costs, and without any proprietary data advantage. That combination offers the least protection, and it is where AI agents can most readily substitute for existing solutions.

Vertical software, built for a specific industry, with deep workflow integration and domain expertise accumulated over years, is considerably more resilient. The same pattern holds as you move upmarket: consumer software is easier to disrupt than SMB, and SMB is easier to disrupt than enterprise. The larger and more complex the organisation, the more the incumbent’s advantages compound.

A further dimension worth noting is the resilience of omnichannel business models, those that combine software with a physical or human layer that AI cannot simply replace. Uber is a useful example: however capable AI becomes, it cannot put a driver in a car. The platform is real, but it is inseparable from the physical network it coordinates. Businesses with this kind of omnichannel architecture are structurally less exposed, because disruption would require replacing not just the software but the entire operational model it sits on top of.

The matrix below maps disruption exposure across software categories and customer segments. The variation is significant, and it is precisely that variation which the current market debate is failing to capture.

Conclusion

AI will reshape the software industry. But reshaping is not the same as dismantling. The businesses best positioned to navigate this shift share one or more of the following characteristics: they serve large, complex customers with high switching costs; they sit on proprietary or regulated data that cannot be replicated; they operate in verticals where domain expertise and trust matter; and they are actively embedding AI into their products rather than waiting to be disrupted by it.

The businesses at greatest risk are those on the opposite end of each of these dimensions: horizontal, consumer-facing, with generic data and limited lock-in. For them, the disruption is real and the timeline is short.

As with the transition to cloud before it, the software industry will not emerge from this period diminished. The companies that adapt well will come out with stronger products, better economics, and deeper customer relationships than they had going in. The transition will be uneven and, in places, painful, but the destination is not the end of software. It is a better version of it.

Against this backdrop, we see a compelling investment thesis forming. Domain-expert-led businesses may represent an area of potential interest, depending on investor risk appetite and market conditions: in particular, those building AI-enabled businesses in industries with large areas of regulated or proprietary data, where the combination of deep sector knowledge and AI capability creates a durable advantage that generalist competitors cannot easily replicate. This is not a thesis about software alone; it is a thesis about the intersection of AI and domain expertise across a wide range of traditional industries.

Many traditional industries, including healthcare, financial services, legal, logistics, and infrastructure, are at an early stage of AI-driven transformation. The pace of change is accelerating, and the window to gain exposure to the most compelling opportunities is narrowing. To access these opportunities well, it is important to invest alongside sector specialist managers and domain experts who understand the nuances of these industries, rather than relying on generalist investors applying a uniform lens.

We therefore seek to build exposure to market-leading AI-enabled or AI-driven businesses that are operating at this intersection, combining proprietary data, domain expertise, and AI capability in ways that are hard to displace. These are the businesses we believe will define the next era of technology-driven value creation.

Disclaimer: This document is provided for information purposes only and does not constitute investment advice, a recommendation, or an offer to buy or sell any financial product. Any forward‑looking statements involve risks and uncertainties. Past performance or historical trends do not guarantee future outcomes. This content is intended for professional investors only.

*Source: Salesforce Q4 2026 financial results